GLOSSATORY

an endless dictionary generated by a recurrent neural net

@GLOSSATORY is a bot which tweets absurd definitions generated by a recurrent neural network (RNN). The RNN was trained on a set of 82,115 definitions extracted from WordNet, a lexical database.
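A character-level RNN trains on one flat text file, so a corpus like this has to be flattened first. The sketch below assumes one `headword: gloss` entry per line. That layout and the function name `make_training_text` are illustrative assumptions, not the format @GLOSSATORY actually used.

```python
def make_training_text(entries):
    """Join (headword, gloss) pairs into one newline-separated training corpus.

    The 'headword: gloss' layout is an assumed format for illustration only.
    """
    lines = []
    for headword, gloss in entries:
        # WordNet lemma names use underscores for multiword terms ("ice_cream").
        word = headword.replace("_", " ")
        # One entry per line, so the net can learn where a definition ends.
        lines.append(f"{word}: {gloss.strip()}")
    return "\n".join(lines) + "\n"
```

With NLTK and its WordNet corpus installed, `entries` could be built as `((s.lemma_names()[0], s.definition()) for s in wn.all_synsets('n'))` after `from nltk.corpus import wordnet as wn`. Restricting to nouns is only a guess, although WordNet 3.0 does contain 82,115 noun synsets.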

Even though it only samples and generates text one character at a time, the net has learned quite a lot about how dictionaries are written: parenthetical comments which qualify a definition by restricting its context, plausible date ranges, very common words like the names of countries, stylistic features which are specific to a particular subdomain.
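Generating one character at a time means running the net in a loop. At each step it scores every character in its vocabulary, one character is drawn from the resulting probability distribution and appended, and the extended text becomes the context for the next step. A temperature parameter, which torch-rnn's sampling script also exposes, divides the scores before the softmax. Low values make the output more conservative and high values make it more erratic. In this Python sketch, `next_logits` is a hypothetical stand-in for the trained RNN.

```python
import math
import random

def sample_index(logits, temperature, rng):
    """Draw one index from softmax(logits / temperature)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max so exp() cannot overflow
    weights = [math.exp(s - top) for s in scaled]
    # random.Random.choices normalises the weights itself.
    return rng.choices(range(len(weights)), weights=weights)[0]

def generate(next_logits, vocab, seed, length, temperature=1.0, rng=None):
    """Extend `seed` by `length` characters, one sampled character per step.

    `next_logits(text)` is hypothetical: it returns one score per character
    in `vocab`. A real RNN carries a hidden state forward instead of
    re-reading the whole text; passing the string keeps the sketch short.
    """
    rng = rng if rng is not None else random.Random(0)
    text = seed
    for _ in range(length):
        text += vocab[sample_index(next_logits(text), temperature, rng)]
    return text
```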

I love the combination of superficial plausibility and phantom semantic depth in its output: it reminds me of Joyce or Ben Marcus' The Age of Wire and String.

ADDITIONAL: @GLOSSATORY now has a sibling on oulipo.social, that Mastodon instantiation which prohibits all posts containing a particular glyph, so if you want computational graph outputs without that, follow along at @GLOSSATORY@oulipo.social. Its output, which is simply that of its sibling, minus all posts containing that glyph, is not without charm:
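Deriving the sibling feed is a simple string test on each post. This is a minimal sketch, assuming posts are plain strings. The names `passes_lipogram` and `lipogram_feed` are hypothetical, and treating capitals and accented forms as the banned glyph is an assumption about how strict the rule is.

```python
import unicodedata

def passes_lipogram(post: str, banned: str) -> bool:
    """Return True if `post` never uses the banned glyph.

    Assumption: capitals and accented variants count as the banned glyph,
    so the text is decomposed (NFKD) and case-folded before the check.
    """
    folded = unicodedata.normalize("NFKD", post).casefold()
    return banned.casefold() not in folded

def lipogram_feed(posts, banned):
    # The sibling's output: the parent's posts, minus any that use the glyph.
    return [p for p in posts if passes_lipogram(p, banned)]
```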

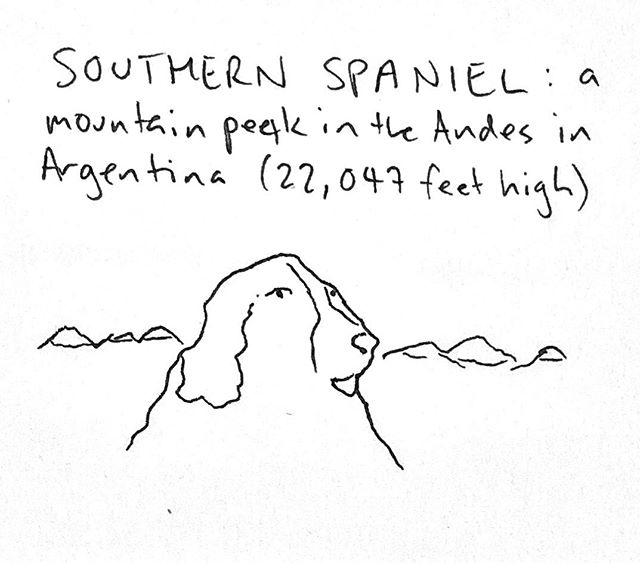

UPDATE: Since early 2019 I've been illustrating selected posts from @GLOSSATORY.

You can follow these on their own Mastodon account, @GLOSSATORY@weirder.earth.

—Mike Lynch 2016-2019

Technical notes and credits

I built @GLOSSATORY with Justin Johnson's Torch-RNN, an efficient recurrent neural net implementation built on Torch, the Lua scientific computing framework, after reading Andrej Karpathy's excellent blog post on the subject. The source code for the Python side of it is on my GitHub, although most of the action is in the Torch-RNN code.

You can also download the new RNN itself (a 65 MB .t7 file); you'll need Torch-RNN to use it.
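For anyone trying the downloaded model, here is a rough sketch of what a small Python wrapper around Torch-RNN might look like. It assumes the `sample.lua` flags shown in the torch-rnn README (`-checkpoint`, `-length`, `-temperature`, `-gpu`), that the sampled text is written to standard output, and that the model emits one `headword: gloss` entry per line. The helper names, the default values and the 280-character cutoff are hypothetical, not taken from the @GLOSSATORY source.

```python
import subprocess

def sample_command(checkpoint, length=2000, temperature=1.0, gpu=-1):
    # sample.lua flags as documented in the torch-rnn README; gpu=-1 means CPU.
    return ["th", "sample.lua",
            "-checkpoint", checkpoint,
            "-length", str(length),
            "-temperature", str(temperature),
            "-gpu", str(gpu)]

def parse_definitions(raw, max_len=280):
    """Split raw sampled text into (headword, gloss) pairs.

    Assumes one 'headword: gloss' entry per line. The first and last pieces
    may be cut off mid-entry, so they are dropped, as is any line too long
    to post (the 280-character limit is an assumption).
    """
    out = []
    for line in raw.split("\n")[1:-1]:
        word, sep, gloss = line.partition(": ")
        if sep and word.strip() and gloss.strip() and len(line) <= max_len:
            out.append((word.strip(), gloss.strip()))
    return out

def sample_definitions(checkpoint, torch_rnn_dir, **kwargs):
    # Runs sample.lua from inside a torch-rnn checkout; needs Torch installed.
    result = subprocess.run(sample_command(checkpoint, **kwargs),
                            cwd=torch_rnn_dir, capture_output=True,
                            text=True, check=True)
    return parse_definitions(result.stdout)
```

Keeping the command builder and the parser separate from the subprocess call means both can be checked without Torch installed.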

- Bird, Steven, Edward Loper and Ewan Klein (2009), Natural Language Processing with Python, O’Reilly Media Inc.

- Johnson, Justin (2016), Torch-RNN

- Karpathy, Andrej (2015), "The Unreasonable Effectiveness of Recurrent Neural Networks"

- Princeton University (2010), WordNet, Princeton University

- Thompson, Jeff (2016), Torch-RNN: Mac install